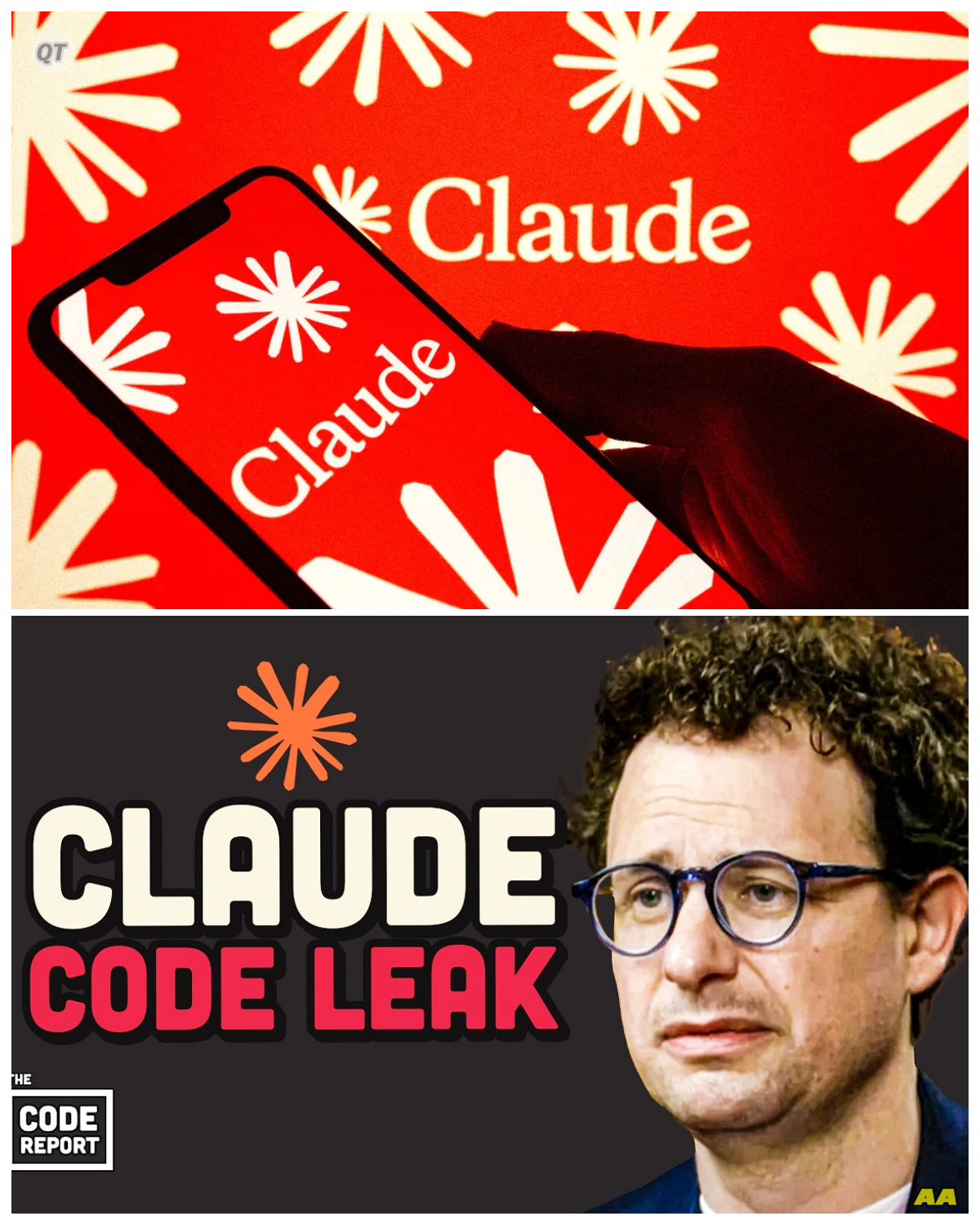

The Catastrophic Leak: Anthropic’s Claude Code Exposed

In a world where technology and innovation reign supreme, the stakes have never been higher.

Anthropic, a leading player in the artificial intelligence arena, has found itself at the center of a scandal that could alter the landscape of AI development forever.

A mistake has led to the unintentional leak of Claude Code’s source code, sending shockwaves through the tech community and raising questions about security, ethics, and the future of AI.

What happens when a single error leads to a cascade of consequences, and how does that reflect the precarious nature of technological advancement?

The leak, which has been described as catastrophic, exposed sensitive details about Claude Code, Anthropic’s AI coding tool, which competes with offerings from OpenAI and Google.

As the details emerged, the implications of this blunder began to sink in.

![LEAKED - Claude Code's Source Code Leaked Twice [Anthropic's Worst Nightmare]](https://i.ytimg.com/vi/km4UPL7fnII/hq720.jpg?sqp=-oaymwEhCK4FEIIDSFryq4qpAxMIARUAAAAAGAElAADIQj0AgKJD&rs=AOn4CLDzuagdkuRXXOecNLVWwhBeRVZ50w)

What does it mean for a company to lose control over its intellectual property, and how can that impact the competitive landscape of AI development?

The source code leak exposed not only the inner workings of Claude but also unreleased features that had been kept under wraps.

Among these features were the much-discussed Undercover Mode and a Frustration Detector, tools that could revolutionize the way AI interacts with users.

What happens when groundbreaking technology falls into the wrong hands, and how does that threaten the very foundation of trust between developers and users?

As the tech world scrambled to analyze the leaked code, experts weighed in on the potential ramifications.

The revelation of Claude’s capabilities raised eyebrows and sparked debates about the ethical implications of AI technology.

What does it mean to wield such power, and how can developers ensure that their creations are used responsibly?

Anthropic’s leadership faced intense scrutiny as they navigated the fallout from the leak.

In a statement, they expressed deep regret over the incident, emphasizing their commitment to security and the responsible development of AI.

Yet, the damage was done.

What happens when a company’s reputation is put on the line, and how do they rebuild trust in the aftermath of such a significant breach?

As the story unfolded, it became clear that this leak was not just a technical failure; it was a reflection of the vulnerabilities inherent in the rapid advancement of AI technology.

The pressure to innovate often leads to corners being cut, and in this case, it resulted in a catastrophic oversight.

What does it mean for the future of technology when the race for progress outpaces the measures taken to protect it?

The tech community reacted with a mix of shock and concern.

Developers and researchers alike began to question the security protocols in place at Anthropic and the broader implications for the industry as a whole.

What happens when the very foundation of trust erodes, and how can companies restore faith in their ability to safeguard sensitive information?

As analysts dissected the leaked code, speculation ran rampant about what this could mean for Claude and its competitors.

Could this blunder provide an opportunity for rival companies to capitalize on Anthropic’s misstep?

What happens when the competitive landscape shifts dramatically due to a single error, and how do companies adapt to survive?

In the days following the leak, discussions about AI ethics and responsibility took center stage.

The incident served as a stark reminder of the potential consequences of unchecked technological advancement.

What does it mean for developers to take responsibility for their creations, and how can they ensure that they are not contributing to a culture of recklessness?

As the dust began to settle, Anthropic faced a crossroads.

Would they emerge from this scandal stronger, learning from their mistakes, or would they falter under the weight of public scrutiny?

What happens when a company must confront its failures and chart a new course in the wake of disaster?

In the aftermath of the leak, the tech world watched closely as Anthropic worked to regain its footing.

The lessons learned from this experience will undoubtedly shape the future of AI development, influencing how companies approach security and ethical considerations.

What will be the lasting impact of this incident on the industry, and how will it redefine the standards for responsible AI development?

As we reflect on this shocking turn of events, we are reminded of the delicate balance between innovation and responsibility.

The leak of Claude’s source code serves as a cautionary tale for all in the tech industry, highlighting the need for vigilance and integrity in the pursuit of progress.

What lies ahead for Anthropic, and how will they navigate the challenges that come with rebuilding their reputation in a rapidly evolving landscape?

In the end, the story of the Claude leak is not just about a tragic mistake; it is a powerful reminder of the responsibilities that come with technological advancement and the importance of safeguarding the future of AI.
